Built for Kids. Applied to Everyone: Arizona's Push to Turn Parents into Gatekeepers and Platforms into Enforcers

When a striker is used to introduce large-scale policy shifts affecting surveillance systems, digital infrastructure, or constitutional rights, the process itself becomes part of the story.

Yesterday, all eyes were on the privacy bill in the Arizona Senate. Sen. Jake Hoffman’s striker to HB2917 advanced with a 6–1 Do Pass recommendation. It’s a landmark proposal that, on paper, would impose one of the most restrictive frameworks on government vehicle surveillance in Arizona.

But while attention was fixed on that vote, something else moved a few doors down and almost no one noticed.

In a separate room, lawmakers advanced a striker that could carry its own implications for how digital systems operate, how user data is handled, and how oversight is applied.

“This is about kids. We have to protect the children,” Rep. Michael Carbone said as he presented the striker to HB2991.

But this wasn’t a simple amendment.

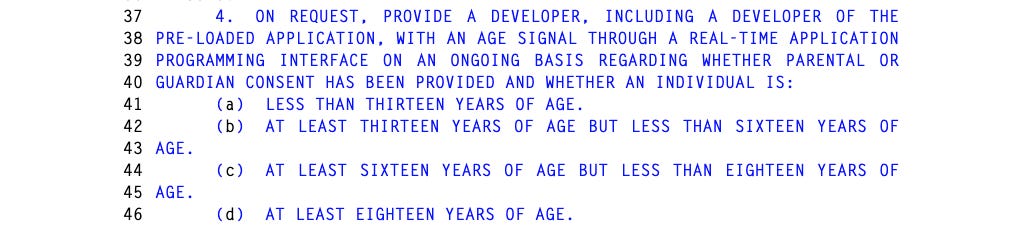

The language, authored by Sen. Shawnna Bolick (R-LD2), does not modify HB2991. It creates a hierarchy of trust in how age is determined, allowing developers to defer to age signals provided by covered companies unless they can meet a “clear and convincing” standard to override them.

The measure advanced on a 4–2 vote.

And while Arizona lawmakers moved it forward, some of the clearest descriptions of how the proposal would function did not come from the hearing room.

They came from outside it.

“This didn’t just amend the bill. It rewrote it,” said Julie Barrett, a Florida-based internet privacy advocate who has been tracking similar proposals across the country.

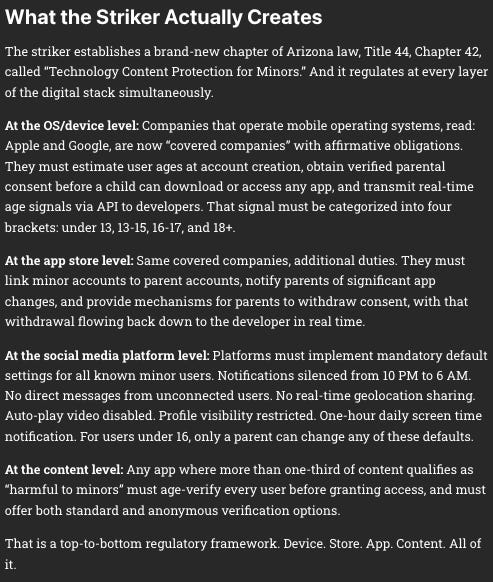

As written, the striker would require covered companies, developers, and social media platforms to implement age verification measures and related safeguards for minors.

On its face, the pitch is simple: protect children online.

But according to Barrett’s analysis, the system required to do that doesn’t just touch minors. It necessarily expands outward to every user, because platforms cannot identify children without first determining who isn’t one.

That concern isn’t isolated.

It’s echoed by the Computer & Communications Industry Association, a Washington, D.C.–based group representing a broad cross-section of tech and communications companies.

The organization says it shares the stated goal behind HB2991: making the internet safer for children.

But CCIA opposes the bill.

According to spokesperson Aodhan Downey, the concerns aren’t theoretical. They’re structural.

“First, the bill faces steep constitutional hurdles by requiring age verification… and consent as a condition to download apps,” Downey said.

“HB 2991 essentially cards every Arizonan at the digital door.”

That framing cuts directly to the issue raised in the hearing but never fully answered: how do you verify minors without building a system that evaluates everyone?

Downey also pointed to downstream effects, not just for users but for businesses.

The bill includes a private right of action, opening the door for lawsuits. In practice, he warns, that could expose smaller Arizona developers to legal risk over technical compliance issues.

“[It] could lead the way to frivolous lawsuits against small Arizona developers over minor technicalities,” he said.

Taken together, the opposition outlines a different version of the bill than the one presented in the hearing.

But the central question remained largely unresolved: how, exactly, does this system work?

Sen. Mitzi Epstein (D-LD12) pressed for an answer.

Rep. Carbone pointed to parental controls set during device setup and acknowledged the complexity, describing systems involving algorithms and signaling between app stores, apps, and users.

“It’s a very complex and deep conversation,” he said.

According to Barrett, the bill does not explicitly require every Arizonan to submit identification. But she argues it may not need to.

“Apple and Google don’t get to verify just the minors. They have to verify everyone to know who the minors are. And that verification data, even if categorized and not directly tied to identity, creates exactly the kind of data architecture that becomes a target.”

By requiring companies to determine age categories for all users — both new and existing — and transmit those signals across platforms, the framework could effectively require age verification at a system-wide level.

Barrett explained it this way: major platforms would need a method to distinguish minors from adults across their entire user base.

Even if that data is categorized rather than directly tied to identity, it still creates a new layer of infrastructure. One that could become a target.

“To the bill’s credit, it does include privacy protections for the anonymous age verification pathway: third-party verifiers cannot retain personal identifying information after verification, cannot use it for any other purpose, and must protect it with reasonable security practices. But the anonymous pathway is optional, not required, users can also choose ‘standard’ verification, which carries no such restrictions. And the bill is entirely silent on what covered companies can do with the age data they collect at the OS level.

“The infrastructure gets built either way.”

Julie Barrett | Substack | March 26, 2026

Minors Have First Amendment Rights, Too

The Constitution isn’t activated by a birthday.

According to Aodhan Downey of the Computer & Communications Industry Association, minors themselves hold First Amendment protections, and legislation cannot simply delegate the role of gatekeeper to parents while requiring platforms to enforce it at scale.

“This risks restricting access for everyone — adults and teens alike — to protected speech, including news, religious text, and educational tools,” Downey said.

He pointed to recent federal court rulings, including in Texas, where similar age-verification mandates have been blocked on First Amendment grounds.

Courts, he noted, have drawn a clear line: the state cannot reduce the entire adult population to accessing only what is deemed appropriate for children.

Nor can it sidestep that limitation by shifting responsibility.

In other words, the constitutional issue isn’t just about protecting kids.

It’s about what happens when that protection is built on a system that filters access for everyone.

Jen’s Two Cents: “The Striker Tactic”

Before getting into what this bill does, it’s important to understand how it got here.

This is something Julie Barrett laid out clearly in her March 26th Substack, and it’s a piece of the process that doesn’t always get the attention it should.

Barrett notes that when a bill like HB2991 passes the House 44–6, it has gone through hearings, public testimony, and debate. People had a chance to weigh in. There’s a record of how it evolved.

But a striker changes that.

Arizona’s own legislative guidance describes a striker as taking “a bill that is still ‘alive,’ gutting its original language, and replacing it with all new content, even if it has nothing to do with the original bill.”

And that new framework moves forward without going through that same level of scrutiny. As Barrett explains, this isn’t a rare workaround. It’s part of how the system operates.

Barrett notes that in the 2026 session alone, dozens of strikers were proposed and adopted by both parties.

When that feature is used to introduce large-scale policy shifts affecting surveillance systems, digital infrastructure, or constitutional rights, the process itself becomes part of the story.